ADDIE Isn’t Dead—You’re Just Using It Wrong

Why This 50-Year-Old Framework Still Matters in the Age of AI

In recent years, ADDIE has become an easy target. It is often described as outdated, slow, or incompatible with the pace of modern business. Agile methods, rapid design models, and AI-powered tools are frequently positioned as replacements, praised for speed and flexibility. Yet this framing misses the point. ADDIE was never designed to compete on speed. It was designed to ensure direction. When organizations struggle with ADDIE, the issue is rarely the framework itself. More often, it is how the framework has been applied or misunderstood.

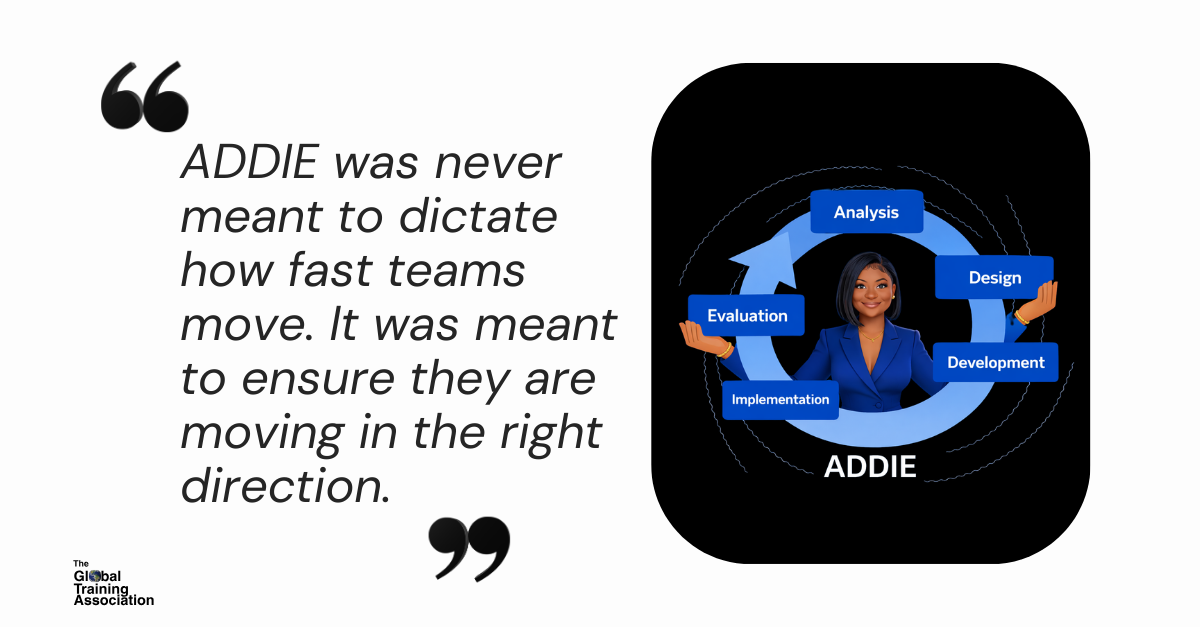

“ADDIE was never meant to dictate how fast teams move. It was meant to ensure they are moving in the right direction.” — Jakaria Ross

At its core, ADDIE stands for Analysis, Design, Development, Implementation, and Evaluation. These phases are not linear steps to be checked off, nor are they relics of a slower era. They represent the essential questions organizations must answer before investing time, budget, and credibility into training. Analysis clarifies the performance problem. Design defines how learning will influence decisions and behavior. Development brings the solution to life. Implementation ensures adoption and reinforcement. Evaluation connects learning back to performance.

ADDIE remains especially relevant in 2026 because organizations are moving faster than ever. AI has dramatically reduced the time required to generate content, simulations, and assessments. What AI has not reduced is the cost of solving the wrong problem. In fact, AI increases that risk by allowing teams to scale misalignment more quickly. In this environment, ADDIE functions as a governance framework—one that ensures speed does not come at the expense of clarity.

“In the AI age, the risk is no longer slow delivery. The risk is fast misalignment.” — Jakaria Ross

Much of the criticism directed at ADDIE stems from its misuse. In many organizations, analysis is reduced to assumptions, design is rushed or skipped, and evaluation is limited to completion metrics. When outcomes disappoint, structure is blamed instead of execution. High-performing organizations take a different approach. They modernize ADDIE by treating it as a strategic thinking model rather than a procedural constraint.

This distinction became clear during a recent client engagement, where subject-matter experts (SMEs) could not agree on which instructional method to use. Some advocated for agile sprints, others for rapid prototyping, and still others for a more traditional approach. The debate stalled progress. Rather than choosing a method prematurely, the project returned to ADDIE, not as a delivery model but as a decision lens.

“When teams disagree on methods, it is often a signal that the problem itself has not yet been clearly defined.” — Jakaria Ross

Analysis reframed the conversation by identifying the actual performance gap and the decisions employees were struggling to make on the job. Design then aligned stakeholders on how learning would support those decisions, including modality, practice opportunities, reinforcement mechanisms, and success measures. Only after that alignment was established did the team determine how to move quickly. Agile development techniques and AI tools were incorporated, but they were guided by design rather than driving it. The result was faster delivery, reduced rework, and clearer ownership across leaders and SMEs.

Using ADDIE effectively in the AI age requires a shift in emphasis, not abandonment. Analysis becomes shorter but sharper, focusing on business impact rather than documentation. Design becomes even more critical, as it directs how AI-generated content is structured, contextualized, and applied. Development accelerates through AI-assisted authoring, simulations, and assessments, but it produces value only when grounded in strong design. Implementation expands beyond launch to include manager enablement, system alignment, and reinforcement strategies. Evaluation moves beyond satisfaction and completion data to examine decision quality, behavior change, and performance outcomes.

“ADDIE does not compete with AI or agile methods. It provides the structure that allows them to work responsibly at scale.” — Jakaria Ross

The enduring value of ADDIE is not that it slows organizations down, but that it prevents confusion, misalignment, and wasted effort. In a period defined by constant change, leaders still need frameworks that create consistency without rigidity. ADDIE continues to do exactly that—when it is treated as a strategic discipline rather than an instructional relic.
